Scripting¶

Overview ¶

Exosite's Murano platform is an event-driven system that uses scripts to route data and perform application logic and rules. These scripts have a rich set of capabilities and are used to perform such actions as storing device data into a time series data store, offloading processing from your devices, and handling Application Solution API requests. These scripts have access to all of the Murano services.

Scripts are written in Lua, on the LuaJIT VM, which is Lua 5.1 with some 5.2 features. For general information about Lua 5.1, please refer to the online Lua manual.

Scripts may be added to custom Murano Applications and IoT Connectors by using either the Exosite business account web UI (Advanced Manage access) or the Murano CLI.

Warning

Scripting for the Murano platform is available for Advanced business accounts with the ability to create custom Applications and IoT Connectors.

Service Calls ¶

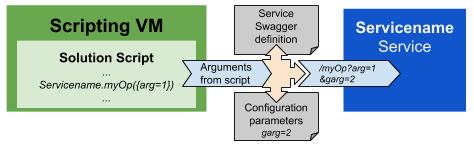

Besides native Lua scripting, Murano scripts offer direct access to high-level functionality through Micro-Service integration. Once the desired service is enabled in your solution, you just need to make a script function call using the capitalized service name as the global reference and the operation name as the function.

Murano Services can also provide configuration parameters, which are configurable from the Murano user interface under the Services tab. Those parameters are then used automatically when interacting with the service. This avoids, for instance, the need to pass secret credentials through the scripting environment.

You can also call a service using the murano.service_call() function.

Example

To send an email, the Email Service send operation can be called from the script with:

local result = Email.send({to="my@friend.com", subject="Hello", text="World"})

The Email service exposes configuration parameters for setting a custom SMTP server. If configured, those parameters are automatically provided when calling the service.

Error handling ¶

If a service call fails for any reason, the following Lua table is returned to the script:

{

error = "Error details, typically the Service response as a string",

status = 400, -- The status code

type = "QueryError" -- Either QueryError or ServerError

}

If the service call responds with a 204 HTTP status code, the following Lua Table is returned to the script:

{

status = 204

}
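As a minimal sketch of how a script might tell these two return shapes apart: the `send_checked` helper below is hypothetical, and the `Email` table is a stand-in stub (used only when the real service is absent) so that the snippet also runs in a stock Lua interpreter.

```lua
-- Stand-in for the Murano Email service so this sketch runs outside Murano.
-- Hypothetical stub: inside Murano the platform provides the real service.
local Email = Email or {
  send = function(params)
    if not params.to then
      return { error = "Missing recipient", status = 400, type = "QueryError" }
    end
    return { status = 204 }
  end
}

-- Hypothetical helper: returns true on success, or false plus a readable
-- message when the error table described above is returned.
local function send_checked(params)
  local result = Email.send(params)
  if type(result) == "table" and result.error ~= nil then
    return false, string.format("%s (%s): %s",
      tostring(result.type), tostring(result.status), tostring(result.error))
  end
  return true
end

-- Failing call: the recipient is missing.
local ok, err = send_checked({ subject = "Hello", text = "World" })
print(ok, err)

-- Successful call.
local ok2 = send_checked({ to = "my@friend.com", subject = "Hello", text = "World" })
print(ok2)
```

Checking for the `error` key, rather than only the status code, keeps the test independent of which status values a given service uses for success.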

Service Call Costs / Usage¶

Service compute resource usage is aggregated to the global solution processing_time usage metric along with the Lua scripting CPU time.

You can profile your service compute usage with the murano.runtime_stats() function.

Keep in mind that many services also report other usage metrics, such as storage size.

Script Execution ¶

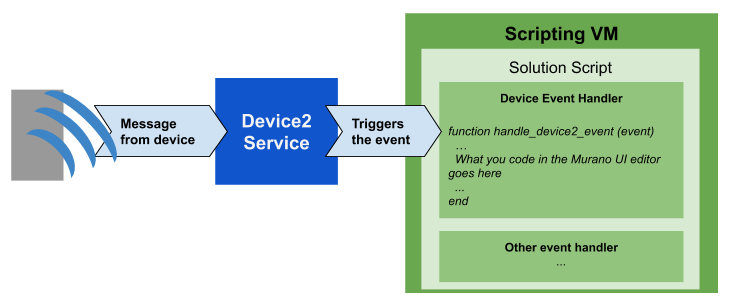

Murano Lua scripts are executed in reaction to system events, which are defined by Murano services.

Example: The Device2 service emits the "event" event whenever a device sends a message to the Device API.

Service events trigger the execution of the matching event handler script defined in your application script. Events and their payloads are documented on their respective Micro-Service pages.

To try it out, use the script editor under the Services tab of the Murano Solution page to define event scripts (e.g., the Timer service timer event). You can also define the event behavior using the Murano CLI or a Murano Template in the /services/<Service alias>_<event name>.lua file.

Cluster Behavior ¶

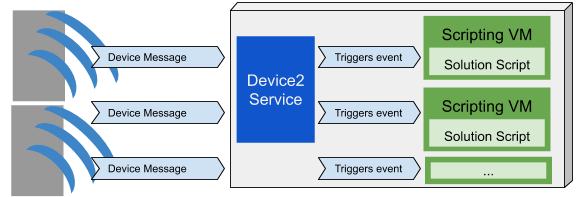

Each event triggers the related script execution in its own separate virtual machine, which ensures full isolation in the system. This means multiple scripts of your solution may be executed at the same time, which enables your application to scale horizontally based on the system load.

Event Handler Context¶

During execution, event handler scripts are wrapped in a handler function corresponding to the Murano Services event. The event handler function will expose arguments defined by the Murano service event.

Tip

Murano event handler scripts share the same context within an application. Therefore, the use of the local keyword is highly recommended for every function and variable definition to avoid potential name collisions.

Example¶

Incoming data from an IoT device triggers the Device service datapoint event, which defines an event parameter for the event handler context. The wrapper function will therefore be:

function handle_device_datapoint (event)

-- Here goes your device data handler script

end

So when your event handler script defines:

print(event.type)

The final execution script will look like the following:

function handle_device_datapoint (event)

print(event.type)

end
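The tip about the local keyword can be illustrated in plain Lua. In this sketch the two handler bodies are simulated as ordinary functions, and all names (`handler_a`, `handler_b`, `shared_counter`, `private_counter`) are hypothetical.

```lua
-- Two hypothetical handler bodies sharing one context.
local function handler_a()
  shared_counter = 1          -- global: visible to every other script
  local private_counter = 1   -- local: not visible outside this function
end

local function handler_b()
  -- Both names are looked up as globals here.
  return shared_counter, private_counter
end

handler_a()
local leaked, hidden = handler_b()
print(leaked, hidden) -- the global leaked across handlers, the local did not
```

The value assigned without local is readable from the second handler, which is exactly the kind of collision the tip warns about.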

API Endpoint Scripts¶

For convenience, Murano offers the option to build your custom HTTP API by defining endpoint scripts.

Endpoint scripts are automatically wrapped in a simple routing mechanism based on the Webservice Murano service set within the event handler for the "request" event. As for a regular Service event handler, the script will receive the request event arguments containing the HTTP request data.

An extra response argument is provided to the endpoint script context allowing a compact response syntax.

The response object content:

| attribute | type | default value | description |
|---|---|---|---|
| code | integer | 200 | The response HTTP status code. If any exception occurs, a 500 is returned. |
| message | string or table | "Ok" | The HTTP response body. If a Lua table is given, the routing wrapper automatically encodes it as a JSON object. |
| headers | table of strings | "content-type" = text/plain or application/json | The HTTP response headers. The default content-type depends on the message type. |

Example¶

response.headers = {} -- optional

response.code = 200 -- optional

response.message = "My response to endpoint " .. request.uri

or

return "My response to endpoint " .. request.uri

Endpoints Functions Context¶

Under the hood, endpoint scripts are stored in the _endpoints table used by the Webservice "request" event handler. So the final API script will be:

local _endpoints = {

["get_/myendpoint"] = function (request, response)

-- endpoint script

return "My response to endpoint " .. request.uri

end

}

function handle_webservice_request(request)

... -- default routing mechanism

end

Websocket Endpoints ¶

Websocket endpoints are handled in a similar manner to webservice endpoints, based on the Websocket Murano service. The function context includes the websocketInfo argument as well as a response argument. The response.message content is automatically sent back to the websocket channel.

response.message = "Hello world"

Or you can directly pass the message as function result:

return "Hello world"

In addition, you can also interact with the websocket channel with the following functions:

websocketInfo.send("Hello") -- Send a message to the channel.

websocketInfo.send("world") -- Useful to send back multiple messages.

websocketInfo.close() -- Close the websocket connection

Websocket Endpoints Functions Context ¶

Similar to the webservice endpoints, websocket endpoints are stored in the _ws_endpoints table. The final script at execution will be:

local _ws_endpoints = {

["/mywebsocketendpoint"] = function (websocketInfo, response)

-- websocket endpoint script

return "Hello world" -- no request argument exists in the websocket context

end

}

function handle_websocket_websocket_info(websocketInfo)

... -- default routing mechanism

end

Modules¶

Murano recommends organizing reusable Lua code into modules. For this purpose, you can define Lua modules under the modules/ directory of your project.

Murano modules follow the Lua standard and should end with a return statement returning the module object. The module can then be accessed by other scripts using require "moduleName".

Example Module¶

local moduleObject = { variable = "World"}

function moduleObject.hello()

return moduleObject.variable

end

return moduleObject

Usage in Scripts

local moduleInstance = require "myModule"

moduleInstance.hello() -- -> "World"
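To try the same pattern in a stock Lua interpreter, the sketch below registers the module body through package.preload, which stands in for a file under modules/ (in Murano the platform resolves the module for you). The private `greeting` variable is an illustrative addition showing that locals inside the module stay hidden from callers.

```lua
-- Stand-in for a modules/myModule.lua file: register the module body so
-- that require can find it without touching the file system.
package.preload["myModule"] = function()
  local moduleObject = { variable = "World" }
  local greeting = "Hello "   -- private to the module

  function moduleObject.hello()
    return greeting .. moduleObject.variable
  end

  return moduleObject
end

local moduleInstance = require "myModule"
local message = moduleInstance.hello()
print(message)

-- require caches the module: a second call returns the same table.
local sameInstance = require "myModule"
print(sameInstance == moduleInstance)
```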

To Keep in Mind About Modules ¶

- Naming: To avoid confusion with Murano services, module names need to start with a lowercase letter. Module names can contain '.' to represent the source file folder structure. The module can then be used with require "path.to.module".

- Context isolation: Murano modules are executed in the same namespace as the other scripts of the application. The use of the local keyword is highly recommended for every function and variable definition to avoid potential isolation issues.

Deprecated Behavior ¶

For compatibility with earlier versions of Murano, the following non-standard behaviors are still supported. They will be removed in a future release and should be avoided.

Module Constructor Call ¶

The module constructor can be called directly, without the require call.

Usage Example

local moduleInstance = myModule()

moduleInstance.hello() -- -> "World"

Module without return Statement ¶

Legacy module definitions that omit the return statement are prepended directly to the application script, which requires the following considerations:

- Isolation: As top-level script, the module script does not have any specific isolation.

- Loading order: The module loading order is not guaranteed, so cross-module calls should be avoided.

- Naming: The module name is irrelevant. Variable and function names are directly accessible to other non-module scripts.

- Non-functional script: Module scripts that do not contain any functions are considered standard Lua modules with a return nil statement.

Deprecated module example:

myModule = { variable = "World"} -- This is a global definition

function myModule.hello()

return myModule.variable

end

Usage

myModule.hello() -- -> "World"

Troubleshooting ¶

The Lua script execution is recorded in the application logs, accessible through the application management console under the Logs tab. To emit logs from Lua scripts, see the log section.

A more detailed description is available in the Solution Debug logs.

Script Environment ¶

Scripts are executed in their own sandboxed instance of the Lua VM to keep them isolated from each other. Each script has access to all Murano Services, but access to those services is authenticated based on your Application.

Limits ¶

Each script execution is resource constrained, with the following limits:

- 20 MB of memory per execution

- 10 seconds of CPU time per event execution. This value is a component of your solution-wide processing_time usage, along with services usage.

- There is no wall-clock (real-time) limit.

If either limit is exceeded, your script will immediately fail with an error message explaining why.

You can profile your script resource usage with the murano.runtime_stats() function.

If you are curious how Lua 5.1 memory management works, please see the following references:

Native Libraries¶

The following global Lua tables and functions are available to Lua scripts. They operate exactly as described in the Lua 5.1 reference manual.

- Basic Functions (Note: the dofile function is NOT available to scripts.)

- string (Note: the string.dump function is NOT available to scripts.)

- table

- math

- os (Note: only the os.difftime, os.date, os.time, os.clock, and os.getenv functions are available to scripts.)

Lua Number Type¶

Lua numbers follow the IEEE 754 64-bit double-precision floating-point format. A 64-bit double can represent every integer exactly within the range -2^53 ... 2^53 (about ±9 × 10^15); the largest power of ten in that range is 1,000,000,000,000,000 (10^15).
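A quick check of where exact integer representation ends, assuming standard IEEE 754 doubles (which have a 53-bit significand). The variable names are illustrative only.

```lua
-- 2^53 is the first point at which adding 1 is lost to rounding.
local limit = 2^53

local still_exact = (limit - 1) ~= (limit - 2)   -- below the limit: distinct
local rounded = (limit + 1) == limit             -- above the limit: collapses

print(string.format("%.0f", limit))
print(still_exact, rounded)
```

In practice this matters for large identifiers or timestamps with sub-millisecond precision: beyond this range, keep them as strings.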

Murano libraries¶

In addition to the Lua system resources, the following global features are available to Lua scripts:

json¶

to_json¶

Converts a Lua table to a JSON string. This function is multi-return, the first value being the result, and the second value being an error value. If the error value is nil, then the conversion was successful, and the result can be used safely.

If non-nil, the error value will be a string.

local jsonString, err = to_json({})

if err ~= nil then

print(err)

end

-- Or directly

local jsonString = to_json({})

Since items with nil values in a Lua table effectively don't exist, you should use json.null as a placeholder value if you need to preserve null indices in your JSON string.

local t = {

name1 = "value1",

name2 = json.null

}

local jsonString = to_json(t)

print(jsonString)

--> {"name1":"value1","name2":null}
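The reason a placeholder is needed can be seen in plain Lua, without any JSON involved. In this sketch `NULL` is a hypothetical stand-in sentinel playing the role of json.null, and `count_keys` is an illustrative helper.

```lua
-- Hypothetical sentinel standing in for json.null.
local NULL = {}

-- Counts the keys that actually exist in a table.
local function count_keys(t)
  local n = 0
  for _ in pairs(t) do
    n = n + 1
  end
  return n
end

local with_nil = { name1 = "value1", name2 = nil }    -- name2 is never stored
local with_null = { name1 = "value1", name2 = NULL }  -- name2 is preserved

local nil_count = count_keys(with_nil)
local null_count = count_keys(with_null)
print(nil_count, null_count)
```

Because the key assigned nil never exists in the table, an encoder has nothing to emit for it; a sentinel value keeps the key present.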

from_json¶

Converts a JSON string to a Lua table. This function is multi-return, the first value being the result, and the second value being an error value. If the error value is nil, then the conversion was successful, and the result can be used safely.

If non-nil, the error value will be a string.

local luaTable, err = from_json("{}")

if err ~= nil then

print(err)

end

-- Or directly

local luaTable = from_json("{}")

By default, JSON nulls are decoded to Lua nil and treated by Lua in the normal way. When the optional argument {decode_null=true} is used, null is interpreted as json.null within the table. This is useful if your data contains items which are "null" but you need to know of their existence (in Lua, table items with values of nil don't normally exist).

local luaTable = from_json("[1, 2, 3, null, 4, null]", {decode_null=true})

print(#luaTable)

--> 6

json.null¶

json.is_null()

Finds whether a variable is json.null

print(json.is_null(json.null))

--> true

print(json.is_null("test"))

--> false

json.stringify()¶

This function behaves the same as to_json.

local jsonString, err = json.stringify({})

if err ~= nil then

print(err)

end

-- Or directly

local jsonString = json.stringify({})

local t = {

name1 = "value1",

name2 = json.null

}

local jsonString = json.stringify(t)

print(jsonString)

--> {"name1":"value1","name2":null}

json.parse()¶

This function behaves the same as from_json.

local luaTable, err = json.parse("{}")

if err ~= nil then

print(err)

end

-- Or directly

local luaTable = json.parse("{}")

local luaTable = json.parse("[1, 2, 3, null, 4, null]", {decode_null=true})

print(#luaTable)

--> 6

bench¶

bench.measure()

Returns the elapsed time, with nanosecond precision, as a human-readable string. It can be used to optimize application code. For example, here is how to measure how long some code in an application endpoint took to run.

local elapsed = bench.measure(function ()

-- do a couple things

end)

return elapsed -- returns, e.g., "122.145329ms"

bench.measure() will also return any parameters the function returns before the elapsed time. For example:

local a, b, elapsed = bench.measure(function ()

return "foo", 2 -- you can return how ever many values you want, adjust the assignment accordingly

end)

print({a=a, b=b, elapsed=elapsed}) -- results in printing `map[a:foo,b:2,elapsed:44.406µs]`

sync_call¶

This function provides a simple strategy for protecting a critical section. A synchronized section ensures that no two executions of that code run concurrently. An optional timeout argument (in milliseconds) defines how long to wait for a previous execution of that section to complete (10000 ms by default, 30000 ms maximum).

The signature is sync_call(lockId, [timeout,] function[, args, ..])

If there are no errors, sync_call returns true, plus any values returned by the call. Otherwise, it returns false, plus the error message table.

local function transaction (key, name)

local data = from_json(Keystore.get{key=key}.value)

data.name = name

Keystore.set{key = key, value = to_json(data)}

end

local arg1 = "userdata_123"

local arg2 = "Bortus"

local status, result_or_err = sync_call("locker_id", 1000, transaction, arg1, arg2)
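A minimal sketch of handling both outcomes of the call. The `sync_call` stand-in below exists only so that the snippet runs outside Murano: it performs no locking, always expects a timeout argument, and reports errors as strings rather than the error table returned by the platform. The `double` function is hypothetical.

```lua
-- Stand-in for sync_call (assumption: used only when the real one is absent).
-- It mimics the "status first, then results or error" convention via pcall.
local sync_call = sync_call or function(lock_id, timeout, fn, ...)
  return pcall(fn, ...)
end

-- Hypothetical critical section that can fail.
local function double(value)
  if type(value) ~= "number" then
    error("value must be a number")
  end
  return value * 2
end

local ok, result = sync_call("locker_id", 1000, double, 21)
local failed_ok, err = sync_call("locker_id", 1000, double, "oops")

if ok then
  print("result:", result)
end
if not failed_ok then
  print("call failed:", tostring(err))
end
```

Always test the first return value before using the second, since its meaning depends on whether the call succeeded.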

context¶

Provides execution context information:

print(context) -- Map containing the context information

Available keys

- solution_id - The Id of the solution context of the script execution

- service - The service Id triggering the execution

- service_type - The type of triggering service. One of: core, exosite, exchange, product, solution

- script_key - The triggering service custom Key. Used as alias when the service Id is a generated string

- event - Event name

- caller_id - In case of solution-to-solution communication, the caller solution Id

log¶

This set of functions provides helpers to emit logs by severity level. Each severity function behaves like the print function.

log.emergency(msg1, msg2...) -- severity=0

log.alert(msg1, msg2...) -- severity=1

log.critical(msg1, msg2...) -- severity=2

log.error(msg1, msg2...) -- severity=3

log.warn(msg1, msg2...) -- severity=4

log.notice(msg1, msg2...) -- severity=5

log.info(msg1, msg2...) -- severity=6

log.debug(msg1, msg2...) -- severity=7

print(msg1, msg2...) -- severity=6

murano¶

Namespace for Murano-specific functions. It introduces two ways to access services from Murano scripts, allowing dynamic service calls.

murano.services

local service = "config"

local operation = "listService"

murano.services[service][operation]({type = "core"})

-- or

murano.service_call(service, operation, {type = "core"})

murano.runtime_stats()

Gets runtime metrics for the current execution. This can be useful during development and debugging to see how close a script is to hitting the CPU or memory limits at any point.

local stats = murano.runtime_stats()

-- CPU time so far in microseconds.

local cpu_time = stats.cpu_time

-- Processing time so far in microseconds.

-- Processing time is aggregated by solution as metric for usage billing.

local processing_time = stats.processing_time

-- Current memory usage in bytes.

-- This includes all script variables and temporary objects not yet garbage collected.

local memory_usage = stats.memory_usage

-- Service call time so far in microseconds.

local service_call_time = stats.service_call_time

-- Script execution time so far in microseconds.

local clock_time = stats.clock_time

return to_json(stats) -- Return runtime stats as a json object.

You can also retrieve detailed service call times by passing the service_calls parameter to murano.runtime_stats().

Keystore.list()

Keystore.get({key="QAQ"})

local stats = murano.runtime_stats({service_calls=true})

-- Service call count for Keystore.list

local listCount = stats["service_calls"]["Keystore.list"]["count"]

-- Total processing time for Keystore.list in microseconds.

local listTime = stats["service_calls"]["Keystore.list"]["time"]

-- Count of return code 200 for Keystore.list

-- Remark: 200 might not exist in stats["service_calls"] if Keystore.list failed.

local code200 = stats["service_calls"]["Keystore.list"]["200"]

-- Return runtime stats including statistics for service calls as a json object.

return to_json(stats)
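As a sketch of how these metrics might be compared against the documented limits, the snippet below computes the share of the memory limit in use. It assumes the 20 MB limit is counted as 20 * 1024 * 1024 bytes; the `memory_ratio` helper is hypothetical, and the `murano` stub with made-up numbers is only a stand-in so that the code runs outside Murano.

```lua
-- Stand-in for the murano namespace (used only when the real one is absent).
-- The fixed numbers below are made up for illustration.
local murano = murano or {
  runtime_stats = function()
    return { memory_usage = 5 * 1024 * 1024, cpu_time = 1500 }
  end
}

-- Assumption: the 20 MB limit is counted as 20 * 1024 * 1024 bytes.
local MEMORY_LIMIT_BYTES = 20 * 1024 * 1024

-- Hypothetical helper: fraction of the memory limit currently in use.
local function memory_ratio(stats)
  return stats.memory_usage / MEMORY_LIMIT_BYTES
end

local ratio = memory_ratio(murano.runtime_stats())
if ratio >= 0.5 then
  print("memory usage is above 50% of the limit")
end
print(string.format("%.0f%% of the memory limit used", ratio * 100))
```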

Hot VM ¶

Murano provides a Hot Virtual Machine (Hot VM) feature, which enables re-use of an existing scripting environment.

What is it? ¶

When enabled, scripting VMs are kept alive for later re-use. When disabled, each script execution (that is, each event) creates a new VM. Hot VMs are kept in a pool per solution ID.

The next event takes any available VM from the pool, or creates a new one if none is ready.

Why use it? ¶

- Speed up by reducing execution time and latency.

  - No need to create a VM for each script execution.

  - No need to load the solution script into the VM every time.

- Cut costs by keeping state and avoiding unnecessary data access.

  - Global variables persist and can be re-used in the next event.

How to enable it? ¶

Every solution can enable this feature from the Murano UI by checking the reuse_vm setting under the solution->Services->Config settings.

Why is it not always ON? Existing solutions could face unexpected behavior.

What to keep in mind? ¶

- Modules are loaded only once, when the VM starts. Do not expect module code to run at each event execution.

- Beware of leaking global variables. To avoid out-of-memory issues, VMs are not re-used once they reach 50% of the allowed memory.

- The VM and its cached values will not always be there. Always check whether the value is in the cache.

- Cache invalidation is a hard problem. Make sure the cached values don’t change.

- Your application will have many VMs at any point in time, and they do not share state.

Example ¶

cachedvalue = cachedvalue or Keystore.get({key="value"}).value
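The effect of the cache can be observed with a small sketch. The `Keystore` stub below is a stand-in that counts reads so the snippet runs outside Murano; `get_setting` and `cached_setting` are hypothetical names. The cached variable is deliberately global so that it can survive between events in a re-used VM.

```lua
-- Stand-in for the Keystore service that counts how often it is read.
local reads = 0
local Keystore = Keystore or {
  get = function(params)
    reads = reads + 1
    return { value = "42" }
  end
}

-- Hypothetical helper: read from the cache when present, otherwise fetch.
-- cached_setting is intentionally global (not local) to outlive one event.
local function get_setting()
  if cached_setting == nil then
    cached_setting = Keystore.get({ key = "setting" }).value
  end
  return cached_setting
end

local first = get_setting()    -- cache miss: reads the Keystore
local second = get_setting()   -- cache hit: no service call
print(first, second, reads)
```

Because a fresh VM starts with an empty cache, the nil check must stay in place even when Hot VM is enabled.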